Appendix F

[Fleegello originally wrote his critique of octan physics as section 2.3 of the Principia. It is listed separately here, as it frankly stands apart, and interferes with the general flow of that work. Most scholars agree that Fleegello did not introduce any novel physics in this section. Rather he took ideas already advanced by contemporary physicists, and adapted them to his own philosophical framework. Editorial comments are enclosed in brackets throughout the text, to distinguish them from primary content.]

The physical (pan)universe has been identified as the Physical Consistency Subfield (PCS) of the Consistency Ideo Field (CIF) – the complete set of abstract mathematical objects that both define physical (temporospatial) relationships and are compatible with consistency logic. The PCS may itself be naturally divided into multiple, logically self-contained physical universes, characterized by distinct physical laws and conditions. Our own universe would be one of these worlds.

Mathematical objects in the PCS may manifest (in part) as states of a physical system, or as operators representing observables or other entities that act on those states. If modern physics is a guide, they incorporate a broad class of multidimensional objects known as dimensors [including common scalars, vectors, more general tensors, and spinors]. Many useful dimensor operators (in particular, those representing observables) define linear relations between other dimensors.

In standard Shrodiik [quantum] theory [named in honor of the pre-Dracian physicist Shrodo], a physical state (of any sufficiently isolated system) is denoted by a ket symbol |ψ>, where ψ is an arbitrary label. This entity is supposed to encompass all physical aspects of a system. |ψ> was originally interpreted in terms of the positions and motions of material particles at a time t in a pre-existing three-dimensional (3D) space x [here bold type indicates a physical 3D vector]. Observables then include the positions, energies and momenta of these particles.

Contemporary Shrodiik physics has a most peculiar feature. For any physical state |ψ>, only the probabilities for measuring different values of a given observable can be computed. Even granted complete knowledge of a physical system at a particular moment, the future course as seen by any octan observer cannot in general be predicted with certainty. Detailed prescriptions for computing probabilities may be found in Shrodiik physics texts.

For any physical system, there is a range of possible states. Related to its probabilistic character, |ψ> can consist of a linear combination, or superposition, of these available states. The selection of a set of fundamental basis states is then arbitrary, to some extent; any given set of basis states can be mixed into new combinations, to form distinct sets.

In general, |ψ> can be viewed as a vector in an abstract space that spans all the possible states. If the components of a state vector are defined with respect to a specified set of basis vectors, then the state may be represented by a single-column dimensor array. Linear operators may in turn be represented by square dimensor arrays that transform any given state (by the rules of matrix multiplication) into another state.

Let Â represent a (linear) operator corresponding to an observable A. Here the "hat" symbol explicitly denotes operator, versus numeric parameter, status. When Â is applied to an arbitrary state vector, the result is typically a linear combination of other state vectors. Suppose, however, that Â is applied to a state vector |ψa> characterized by a well-defined value a of A – i.e., a state in which a measurement of A will definitely yield the value a. Then Â acts on |ψa> by extracting this value:

Â|ψa> = a|ψa> .

This is what it means for Â to represent an observable. Mathematically, |ψa> is an eigenstate of Â, with a well-defined value a of that observable.
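As a minimal numerical sketch of the eigenvalue relation above, assume a two-state system in which the operator is a 2 × 2 array acting on single-column states. The particular array A_HAT, the chosen states, and the helper name apply_operator are illustrative assumptions, not part of Fleegello's text.

```python
# Illustrative two-state system; A_HAT is an assumed stand-in for an observable's operator.
def apply_operator(op, state):
    """Apply a square array (list of rows) to a single-column state vector."""
    return [sum(row[j] * state[j] for j in range(len(state))) for row in op]

A_HAT = [[0, 1],
         [1, 0]]

s = 2 ** -0.5
psi_plus = [s, s]     # eigenstate with well-defined value a = +1
psi_minus = [s, -s]   # eigenstate with well-defined value a = -1
psi_other = [1, 0]    # not an eigenstate of A_HAT

result_plus = apply_operator(A_HAT, psi_plus)     # equals (+1) * psi_plus
result_minus = apply_operator(A_HAT, psi_minus)   # equals (-1) * psi_minus
result_other = apply_operator(A_HAT, psi_other)   # [0, 1], a different state
```

Only the first two states are returned unchanged apart from a numeric factor; the third is carried into a different state, as described for an arbitrary state vector.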

From the perspective of a given observer, |ψ> evolves in a smooth manner until a measurement is made. Curiously, at this point |ψ> jumps discontinuously to an eigenstate corresponding to the measurement result. Performing a measurement (observation) abruptly reduces a system to an eigenstate of the observed quantity!

In bra-ket notation [physicists used this archaic script during Fleegello's era], the numeric overlap between two states |φ> and |ψ> is represented by <φ|ψ>, where the states are normalized such that <ψ|ψ> = 1 for all |ψ>. The overlap value is a probability amplitude for starting with a system in state |ψ>, but observing it in state |φ>. The actual probability is the absolute square of this amplitude, or |<φ|ψ>|². For example, consider a one-particle system. If |x> is the state with the particle at 3D position x, then <x|ψ> is the wavefunction ψ(t,x) of Shrodiik mechanics, and |ψ(t,x)|² is the probability per 3D volume at time t for finding the particle at x. This is an absolute probability if ψ(t,x) is normalized such that the integral [a continuous sum over a volume of a function multiplied by an infinitesimal volume element] of |ψ|² over x is unity.
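The overlap rule can be sketched for a discrete two-state system, assuming states are stored as lists of complex components. The function names overlap and probability, and the sample amplitudes, are hypothetical choices made for illustration.

```python
def overlap(phi, psi):
    """Numeric overlap <phi|psi>: conjugate the bra components, then sum the products."""
    return sum(p.conjugate() * q for p, q in zip(phi, psi))

def probability(phi, psi):
    """Absolute square of the probability amplitude <phi|psi>."""
    return abs(overlap(phi, psi)) ** 2

psi = [0.6, 0.8j]    # assumed sample state, normalized so that <psi|psi> = 1
phi_1 = [1, 0]
phi_2 = [0, 1]

norm = overlap(psi, psi)        # approximately 1
p1 = probability(phi_1, psi)    # approximately 0.36
p2 = probability(phi_2, psi)    # approximately 0.64
```

The two probabilities sum to one because phi_1 and phi_2 form a complete orthonormal set for this small system.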

The expectation value of an observable A, defined as the average value Aavg over repeated measurements on identical states |ψ>, is given by

Aavg = <ψ|Â|ψ> .

The observable A has a definite value a only if |ψ> is already an eigenstate |ψa> of Â.

In classical physics, material particles were treated as localized entities, distinct from waves (such as light) that propagate through underlying fields or media. Only waves could undergo self-interference, or diffract around obstacles. At the dawn of the Shrodiik revolution, ostensible particles were found to have wave properties, and nominal waves were found to sometimes act like classical particles. Observables that classically had a continuous range of values – e.g., the energy of an electron in an atom – might now be quantized, or restricted to discrete values.

Energy is one of the central observables in quantum physics. It is associated with the Hoobitean operator Ĥ [named for the classical physicist Hoobitu]. For material particles, Ĥ is often written as the sum of a kinetic energy term Ĥo plus a potential energy (interaction) term Ĥint. After wave-particle duality was discovered, the energy E of a (set of) particle(s) in an eigenstate of Ĥ became associated with a temporal frequency f, or angular frequency ω:

E = ℎ f = ℎ ω / 2𝜋 = ℏ ω ,

where ℎ is the minuscule but nonzero Planko constant [named for the pioneering physicist Planko], and ℏ is the reduced Planko constant. Conversely, experiments showed that the energy in a traditional wave of frequency f was not continuously distributed over the wave, but carried by discrete quanta with individual energies given by the same equation. Ĥ embodies the way a state changes in time. When Ĥ acts on a state vector |ψ>, the result is the constant (iℏ) multiplied by the time rate of change of |ψ>, where i is the imaginary unit (square root of -1). It is remarkable how imaginary (or complex) quantities arise naturally in the equations of Shrodiik physics!

A 3D vector quantity closely related to energy is linear momentum, represented by the symbol p. Much as energy is associated with temporal frequency, momentum px along a spatial axis x is associated with a spatial frequency equivalent to an inverse wavelength λx or angular wavenumber kx:

px = ℎ / λx = ℏ kx .

Shorter wavelength (and so either larger momentum, or a smaller value of ℎ) generally begets more particle-like behavior. When the operator p̂x acts on a state vector |ψ>, the result is the constant (-iℏ) multiplied by the spatial rate of change of |ψ> along x.
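The energy-frequency and momentum-wavelength relations can be checked numerically. The sketch below assumes the SI value of the Planck constant as a stand-in for the Planko constant; the function names and sample inputs are likewise illustrative.

```python
import math

# SI value of the Planck constant, used only as an assumed stand-in for the Planko constant.
H_PLANKO = 6.62607015e-34            # joule-seconds
HBAR = H_PLANKO / (2 * math.pi)      # reduced constant

def energy_from_frequency(f):
    return H_PLANKO * f

def energy_from_angular_frequency(omega):
    return HBAR * omega

def momentum_from_wavelength(wavelength):
    return H_PLANKO / wavelength

def momentum_from_wavenumber(k):
    return HBAR * k

f = 5.0e14               # sample temporal frequency, hertz
wavelength = 6.0e-7      # sample wavelength, metres

E_a = energy_from_frequency(f)
E_b = energy_from_angular_frequency(2 * math.pi * f)
p_a = momentum_from_wavelength(wavelength)
p_b = momentum_from_wavenumber(2 * math.pi / wavelength)
```

Each pair agrees to rounding error, since the angular quantities differ from the ordinary ones only by the factor 2𝜋 absorbed into ℏ.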

For a single material particle, the relationship between time and energy is thus analogous to that between spatial position and linear momentum. For a multiparticle system, however, the situation is more nuanced. Whereas every particle may be assigned its own dynamical position and momentum operators, all particles traditionally share a common time. Time is then treated as a numeric system parameter, and not associated with a true operator.

Using calculus, it can be shown that the (unnormalized) time- and space-dependent wavefunction ψ(t,x) of a particle (or any quantum) with pure angular frequency ω and wavenumber kx (energy E and momentum px) has the exponential, wave-like form

ψ(t,x) = e^(−iωt) e^(i kx x) = cos( kx x − ωt ) + i sin( kx x − ωt ) ,

where e is the Eulero number of mathematics, and the "cos" and "sin" terms refer to standard trigonometric functions. More generally, for a 3D wavevector k (momentum p) and position x,

ψ(t,x) = e^(−iωt) e^(i k·x) ,

where the scalar product k·x is the scalar length of x multiplied by the projection of k onto x. Note that the relative probability at any moment for finding the particle at a given 3D position (equal to the absolute-square of the wavefunction) is the same for all x values.
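A short sketch can confirm both the trigonometric form of the one-dimensional wave and the uniform probability density. The values of omega, k and t, and the sample positions, are arbitrary assumptions.

```python
import cmath
import math

def plane_wave(t, x, omega, k):
    """Unnormalized wavefunction with pure angular frequency omega and wavenumber k."""
    return cmath.exp(-1j * omega * t) * cmath.exp(1j * k * x)

omega, k, t = 2.0, 3.0, 0.5          # arbitrary sample values
positions = [-4.0, 0.0, 1.5, 10.0]

# Relative probability density |psi|^2 at each sample position.
densities = [abs(plane_wave(t, x, omega, k)) ** 2 for x in positions]

# Compare the exponential form with cos(kx - wt) + i sin(kx - wt) at one point.
x0 = 1.5
exponential_form = plane_wave(t, x0, omega, k)
trig_form = complex(math.cos(k * x0 - omega * t), math.sin(k * x0 - omega * t))
```

Every entry of densities equals one to rounding error, which is the numerical counterpart of the statement that a state of pure momentum is completely unlocalized.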

In quantum physics, the order in which observables are measured may be significant. Consider two observables A and B, represented by operators Â and B̂. The observables/operators commute if the order of measurement is irrelevant, or equivalently if the order in which Â and B̂ act on an arbitrary physical state is irrelevant – i.e., if ÂB̂ = B̂Â. They do not commute if ÂB̂ ≠ B̂Â. In this case the very act of measuring A or B introduces uncertainty into the value of the other, complementary observable. A system cannot in general be in a simultaneous eigenstate of two operators that do not commute – neither operator would alter such a state, so their order could not matter. It is then impossible to simultaneously measure the values of two non-commuting observables, since such a measurement must create an eigenstate of both.

In Shrodiik mechanics, the archetypal pair of observables with non-commuting operators are the position x and linear momentum px of a material particle along a given spatial direction. Classically, these quantities commute, and every particle simultaneously has well-defined position and linear momentum. Based on their operator interpretations, the quantum commutation relation between x̂ and p̂x is

x̂ p̂x - p̂x x̂ = iℏ .

Because the operators do not commute, an eigenstate of p̂x must span a range of x values. As described earlier, an eigenstate of p̂x is indeed totally unlocalized in space. Conversely, it can be shown that an eigenstate of x̂ must include all possible linear momenta px. More generally, if σx is the [standard deviation] uncertainty in x, and σpx the uncertainty in px, it can be shown that

σx σpx ≥ ℏ/2 .
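The commutation relation between x̂ and p̂x can be checked numerically by letting both orderings act on a test wavefunction. This sketch assumes units in which ℏ = 1, approximates the spatial rate of change by a central finite difference, and uses an arbitrary Gaussian test function; all names are illustrative.

```python
import math

HBAR = 1.0     # assumed natural units
STEP = 1e-4    # finite-difference step

def psi(x):
    """Arbitrary smooth test wavefunction (unnormalized Gaussian)."""
    return math.exp(-x * x)

def p_hat(f):
    """Return the function (p f)(x) = -i*hbar * df/dx, using a central difference."""
    return lambda x: -1j * HBAR * (f(x + STEP) - f(x - STEP)) / (2 * STEP)

def x_hat(f):
    """Return the function (x f)(x) = x * f(x)."""
    return lambda x: x * f(x)

def commutator_on_psi(x):
    """Evaluate (x p - p x) psi at the point x."""
    return x_hat(p_hat(psi))(x) - p_hat(x_hat(psi))(x)

x0 = 0.7
lhs = commutator_on_psi(x0)     # numerical (x p - p x) psi
rhs = 1j * HBAR * psi(x0)       # i * hbar * psi
```

The two sides agree up to the small error of the finite-difference approximation, whichever test function or point is chosen.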

Consider then a system |ψ> = |xo>, in which a particle initially has a definite position xo. Suppose an observer measures first the position x of the particle, and then its momentum px. Because the system starts in a state of well-defined position, the particle will be found at xo with 100% probability, and the wavefunction is unchanged. Because this wavefunction contains all possible momentum values, any value of momentum may be observed in the subsequent measurement, with equal probability. Now suppose the order of measurement is reversed – the observer measures momentum first, followed by position. The likelihood of initially observing any value of momentum px is the same as before. But the momentum measurement forces the particle into a state of well-defined momentum. The particle's position is thereby scrambled, and the observer may subsequently find the particle at any location!

Consider now a more general system in which one member of any pair of non-commuting observables is well defined. Mathematically, the system can be considered a superposition of pure eigenstates with different but well-defined values of the other non-commuting quantity. The existence of non-commuting observables is contrary to classical (pre-Shrodiik) physics. The natural law that describes physical evolution applies to superpositions of pure states, rather than to states in which all classical variables have precise values.

The state |ψ> of a physical system can in general be written as a coherent sum

|ψ> = C1|φ1> + C2|φ2> + C3|φ3> + . . . = Σj Cj|φj>

over a complete set of orthonormal states |φj>, where the Cj are (complex) constants.

The |φj> are orthonormal if <φj|φk>=0 for all j≠k, and <φj|φj>=1 for all j.

The choice of the |φj> is arbitrary to some extent, but they must be eigenstates of a complete set of commuting observables that cover all physical aspects of the system.

The probability of starting with the system in state |ψ> but finding it in a state |φj> is then Cj*Cj, where Cj* is the complex conjugate of Cj. The expectation value of an observable A is

Aavg = <ψ|Â|ψ> = Σj Σk Cj* Ck Ajk , where Ajk = <φj|Â|φk> .

The off-diagonal terms j ≠ k in the sum represent nonclassical interference between the different states in the coherent superposition comprising |ψ>. These terms in general vanish only if the |φj> have well-defined values of A (i.e., are eigenstates of Â).
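The separation of the expectation value into classical (diagonal) and interference (off-diagonal) terms can be sketched for two basis states. The arrays and coefficients below are assumed examples, and expectation_parts is a hypothetical helper name.

```python
def expectation_parts(coeffs, A):
    """Split sum_j sum_k Cj* Ck Ajk into its diagonal and off-diagonal parts."""
    n = len(coeffs)
    diagonal = sum(coeffs[j].conjugate() * coeffs[j] * A[j][j] for j in range(n))
    off_diagonal = sum(
        coeffs[j].conjugate() * coeffs[k] * A[j][k]
        for j in range(n) for k in range(n) if j != k
    )
    return diagonal, off_diagonal

s = 2 ** -0.5
coeffs = [s, s]                      # equal superposition of two basis states

A_eigenbasis = [[1, 0], [0, -1]]     # basis states are eigenstates of this operator
A_mixing = [[0, 1], [1, 0]]          # basis states are not eigenstates of this operator

diag_1, off_1 = expectation_parts(coeffs, A_eigenbasis)   # no interference contribution
diag_2, off_2 = expectation_parts(coeffs, A_mixing)       # interference contribution only
```

In the first case the interference part vanishes because the basis states have well-defined values of the observable; in the second case the whole expectation value comes from the off-diagonal terms.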

The physical interpretation of the state vector |ψ> has a long and tortuous history. Originally it was viewed merely as a device for computing the probability of observing a given outcome in an experiment. Reality was seen to reside in the observed positions and momenta of individual particles. The physical universe was assumed to evolve in a linear manner, with a single unfolding history, which was deterministic in only a limited, probabilistic sense. The act of observation was divorced from the natural evolution of a physical system, and treated as something special, even magical (the so-called measurement problem).

Yet consistency logic requires that the universe be totally deterministic. Recently, the contradictions inherent in the original interpretation of Shrodiik mechanics have led the Evette group to develop an alternative multi-world view, in which reality resides in |ψ> itself. Observers, measuring devices and related processes are now included as integral parts of |ψ>. The physical panuniversal |ψ> is viewed as a superposition of conventional quantum worlds, each represented by a restricted state vector, which have become decohered and mutually orthonormal [or minimally overlapping]. These worlds evolve [almost] independently of each other, and continually split [rarely merge] into new separate, decohered worlds through time. A given observer occupies one conventional world at a given instant. As this world subsequently splits, the observer likewise branches into multiple selves, each with a distinct future experience. An observer does not see physical evolution as completely deterministic simply because no individual mind encompasses all worlds of the unfolding panuniversal state.

[Unfortunately, only the barest references to the original Evette School survive in the historical record. The writings may have been systematically destroyed by conservative, fundamentalist religious sects that flourished at the time, and found the work heretical. These traditionalist factions believed the universe progressed in a linear fashion along a single preordained path, in accordance with a divine plan for the octan race. The random, branching character of the multi-world view demanded an even greater, and to many more threatening, decentering to the octan psyche than the recognition five octujopes earlier that Jopitar was not at the physical center of the universe, but was a nonsingular ball of ordinary matter orbiting a commonplace star adrift on a vast ocean of space and time.]

Let |ϕ0> represent a conventional world in the Evette sense. Then the matrix elements A0j between this world and any other coexisting conventional world |ϕj> must be [essentially] zero for all observables A, including noncommuting quantities. Such conventional worlds evolve independently, with no [minimal] mutual interference.

Suppose that |ϕ0> incorporates a subsystem consisting of a simple superposition ( |B1> + |B2>) of orthonormal eigenstates of an observable B. Then

|ϕ0> = ( |B1> + |B2> ) |Ɛ>

where |Ɛ> represents the environment of the subsystem. The environment may interact with the subsystem, so as to become correlated with its eigenstates. This happens in particular when |Ɛ> includes an observer who measures the value of B. If B̂ commutes with the interaction Hoobitean Ĥint, the eigenstates of B̂ are not changed by the interaction, and

|ϕ0> ➜ |B1> |Ɛ1> + |B2> |Ɛ2> = |ϕ1> + |ϕ2>

where |Ɛ1> and |Ɛ2> are themselves eigenstates of observables that commute with Ĥint.

Consider now the matrix elements A12 and A21 between |ϕ1> and |ϕ2> for an arbitrary observable A. If Â commutes with B̂, then A12 = A21 = 0, since the eigenstates of B̂ are orthonormal. If Â does not commute with B̂, then it acts only on the B subsystem (if Â were a product of operators that separately act on the subsystem and its environment, then A would not be a valid observable). In this case Â does not affect |Ɛ>, and A12 = A21 = 0 if |Ɛ1> and |Ɛ2> are orthonormal. The states |ϕ1> and |ϕ2> can thus be identified as two new conventional worlds, split off from the original |ϕ0>, if only |Ɛ1> and |Ɛ2> are orthonormal.
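The role of the environment states can be sketched with small vectors. For an operator that acts on the subsystem alone, the cross term factors as A12 = <B1|Â|B2> <Ɛ1|Ɛ2>. The two-component subsystem and environment vectors and the helper names below are assumptions made for illustration only.

```python
def overlap(phi, psi):
    """Numeric overlap <phi|psi>."""
    return sum(p.conjugate() * q for p, q in zip(phi, psi))

def apply_operator(op, state):
    """Apply a square array (list of rows) to a single-column state vector."""
    return [sum(row[j] * state[j] for j in range(len(state))) for row in op]

def cross_term(A_sub, B1, B2, env1, env2):
    """A12 = <B1|A|B2> * <E1|E2> for an operator acting on the subsystem alone."""
    return overlap(B1, apply_operator(A_sub, B2)) * overlap(env1, env2)

B1, B2 = [1, 0], [0, 1]        # orthonormal eigenstates of the observable B
A_sub = [[0, 1], [1, 0]]       # assumed operator that does not commute with B

a12_decohered = cross_term(A_sub, B1, B2, [1, 0], [0, 1])       # orthonormal environments
a12_partial = cross_term(A_sub, B1, B2, [1, 0], [0.6, 0.8])     # overlapping environments
a12_coherent = cross_term(A_sub, B1, B2, [1, 0], [1, 0])        # identical environments
```

Only the first case yields a vanishing matrix element, so only there do the two branches qualify as separate conventional worlds in the sense described above.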

[This line of reasoning, which did not originate with Fleegello, helped resolve a problem with the many-world interpretation, involving an apparent ambiguity in the identification of the individual worlds. Some researchers argued that the choice of states |B1> and |B2> in the given example was quite arbitrary. By choosing a rotated basis set, e.g.

|b1> = (|B1> + |B2>)/√2 and |b2> = (|B1> − |B2>)/√2 ,

the state |ϕ0> appeared to split into a different set of conventional worlds. Eventually it was realized that the interaction between a system and its environment naturally selects a particular (compatible) basis set. If the operator B̂ does not commute with Ĥint, then |B1>|Ɛ> does not evolve into |B1>|Ɛ1>, since |B1> is itself transformed by the interaction.]

Conventional worlds can thus be distinguished by non-interfering "memories" of prior branchings. The storage sites of these data may include, but are by no means limited to, animal brains (and recently, scientific apparatus acting as extensions of those brains). The physical structure of a brain determines its interactions with the environment, and thus the types of conventional worlds (i.e., which observables are relevant and well-defined) generated by the observation process. If a brain is so constructed that only one value of a particular observable can communicate with (affect) other elements in a conscious field, then a state including a coherent superposition of different values of that observable at the same moment must correspond to distinct unified ideo fields, or selves, in separate (conventional) worlds. The information stored in a brain does not define the external reality of the associated world – a person may make faulty observations – but it may still be a point of reference by which that world is distinguished from others. Two distinct conventional worlds can even merge, if their distinguishing memories are lost or corrupted so as to become identical. Observers inhabiting the worlds would experience no sense of merger, as all valid memories of a former distinct past would be absent.

What determines useful observables, other than position? The mathematician Noethra has linked many such quantities to symmetries in the equations of motion that describe the temporal evolution of |ψ>. Noethra's first theorem states that for every continuous, differentiable coordinate transformation that does not alter these equations, there is a corresponding observable whose expectation value is conserved, or constant over time. For sufficiently isolated (closed) systems, the equations are in fact generally unaffected by several such transformations, including time displacement, spatial displacement, and spatial rotation. Each of these symmetries is associated with an observable and conserved quantity.

Why are the dynamical equations unaffected by the given transformations? Although physical conditions clearly vary at different locations in time-space, there is nothing else to distinguish points or directions. From an ideobasic perspective, physical law for a sufficiently closed system (which incorporates all relevant causal agents) should then depend only on extant physical conditions. Although distinct physical laws may apply in different physical universes, the same law and dependencies should apply at all times, positions and orientations within a given universe. This leads to the observed symmetries.

When the equations of motion are not affected by displacements in time (i.e., they remain the same over time), then what is commonly called energy is conserved. This is primarily what makes energy a useful observable. Note that only the laws of motion are unchanging; physical conditions and entire systems may change dramatically over time. When the equations of motion are not affected by displacements in spatial position (i.e., physical law is the same at different spatial points), then linear momentum is conserved, and is a useful observable. When the equations of motion are not affected by spatial rotations (orientation in space), then angular momentum is conserved, and useful. It can be shown more generally that the expectation value of any operator that both commutes with the Hoobitean operator Ĥ, and is not explicitly a function of time, is also a constant of motion. Every symmetry in Ĥ is thus associated with a conserved quantity, and a corresponding observable.
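The link between commuting with Ĥ and conservation can be sketched for a two-level system written in its energy eigenbasis, where each coefficient simply acquires the phase factor e^(−iEt/ℏ). The energies, operators, and initial coefficients are assumed sample values, with ℏ set to 1.

```python
import cmath
import math

HBAR = 1.0                 # assumed natural units
ENERGIES = [1.0, 3.0]      # assumed energy eigenvalues

def evolve(coeffs, t):
    """Evolve energy-basis coefficients: each picks up the phase exp(-i E t / hbar)."""
    return [c * cmath.exp(-1j * E * t / HBAR) for c, E in zip(coeffs, ENERGIES)]

def expectation(coeffs, A):
    """Expectation value sum_j sum_k Cj* Ajk Ck (real for a Hermitian array A)."""
    n = len(coeffs)
    return sum(coeffs[j].conjugate() * A[j][k] * coeffs[k]
               for j in range(n) for k in range(n)).real

A_commuting = [[2.0, 0.0], [0.0, 5.0]]      # diagonal in the energy basis: commutes with H
A_noncommuting = [[0.0, 1.0], [1.0, 0.0]]   # mixes energy eigenstates: does not commute

c0 = [0.6, 0.8]
times = [0.0, math.pi / 4, 1.0]
conserved = [expectation(evolve(c0, t), A_commuting) for t in times]
varying = [expectation(evolve(c0, t), A_noncommuting) for t in times]
```

The first list stays at 3.92 for all times, while the second oscillates as 0.96 cos(2t), illustrating that only the commuting, time-independent operator has a constant expectation value.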

Classical observables may have nonclassical analogs that result from a reinterpretation (typically involving commutation relations) of associated operators. In particular, the commutation relations among the three orthogonal (mutually perpendicular) angular momentum operators imply the existence of a nonclassical type of angular momentum, known as spin. Elementary particles are found to inherently possess this type of angular momentum. Particle spin is naturally quantized to discrete values, characterized by a spin number s, which must be a non-negative integral multiple of 1/2. Overall spin angular momentum is ℏ√(s(s+1)), while the maximum possible component in any 3D direction is ℏs.

Spin angular momentum operators can be represented by irreducible (2s+1) × (2s+1) arrays. The spin aspect of a spin-s particle can then be represented by a (2s+1)-dimensional single-column dimensor known as a pointor, designated by Š. An overall single-particle state may in turn be represented by a pointor wavefunction Š(t,x) of time t and 3D position x.
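The spin quantities quoted above follow directly from the spin number s. The sketch below works in units of ℏ and uses a hypothetical helper named spin_properties; it is an arithmetic illustration rather than a construction of the spin arrays themselves.

```python
import math
from fractions import Fraction

def spin_properties(s):
    """Return (overall magnitude, maximum component, pointor dimension) in units of hbar."""
    s = Fraction(s)
    if s < 0 or (2 * s).denominator != 1:
        raise ValueError("spin number must be a non-negative multiple of 1/2")
    magnitude = math.sqrt(s * (s + 1))    # sqrt(s(s+1))
    max_component = float(s)              # largest component along any direction
    dimension = int(2 * s + 1)            # number of pointor components
    return magnitude, max_component, dimension

spin_zero = spin_properties(0)                  # scalar: one component
spin_half = spin_properties(Fraction(1, 2))     # spinor: two components
spin_one = spin_properties(1)                   # three components
```

For s = 1/2 the overall magnitude √3/2 exceeds the maximum component 1/2, so the spin can never be fully aligned with any single direction.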

Spinless (s=0) particles are represented by simple scalar (zero-rank dimensor) functions, with no inherent directionality. [No elementary spin-0 particles were known in Fleegello's era, although composite spin-0 particles (e.g., pions) were certainly recognized.] Spin-½ particles are represented by special two-dimensional pointors known as spinors. Spinors do not transform like geometric vectors under coordinate transformations. Spin-1 particles with mass are represented by three-dimensional pointors, which do transform like geometric vectors. [Because massless spin-1 particles (in particular photons) have no rest frame but are constrained to move at light speed, they must be represented by two-component pointors.] Particles with even larger spin values are represented by distinct pointor classes.

Yet particles do not normally exist in isolation. How then can the state of a multiparticle system be represented? Suppose first that the particles are distinguishable, and motions are much slower than light speed. Such systems have traditionally been represented by a direct product of the pointor functions for the individual particles, in which time t is a common system parameter, but the coordinates xj of the various particles j are distinguished. For example, the state of a two-particle system might be represented by

Ša(t,x1) Šb(t,x2) ,

where subscripts a and b label two different single-particle states.

Suppose now that two particles in a system are identical. The probability of finding either cannot be affected when their labels are exchanged – they would otherwise be distinguishable. Because the probability is equal to the absolute square of the wavefunction, and the associated exchange operator must (as an observable) be linear, the state can at most acquire a complex phase factor (absolute value one) under particle exchange. Since two successive exchanges must leave the state unchanged, the phase factor is limited to the values ±1. A state must then be either symmetric (unchanged) or antisymmetric (phase factor -1) under identical particle exchange.

The wavefunctions of identical bosons (particles with integral spin) are found to be symmetric, while those of identical fermions (particles with half-integral spin) are antisymmetric. The appropriate symmetry can be achieved if a system is represented by a sum over the direct pointor products, in which the functional dependencies of the particles are suitably interchanged. For example, the state of two identical fermions might be represented by

Ša(t,x1) Šb(t,x2) − Ša(t,x2) Šb(t,x1) .
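The exchange behavior of this antisymmetric combination can be sketched with ordinary scalar functions standing in for the pointor functions, with time suppressed and one spatial coordinate per particle. The two single-particle functions are arbitrary assumptions.

```python
import math

def state_a(x):
    """Assumed single-particle function labelled a."""
    return math.exp(-x * x)

def state_b(x):
    """Assumed single-particle function labelled b."""
    return x * math.exp(-x * x)

def two_fermion(f, g, x1, x2):
    """Antisymmetric combination f(x1) g(x2) - f(x2) g(x1)."""
    return f(x1) * g(x2) - f(x2) * g(x1)

x1, x2 = 0.3, 1.1
original = two_fermion(state_a, state_b, x1, x2)
exchanged = two_fermion(state_a, state_b, x2, x1)     # particle labels swapped
same_state = two_fermion(state_a, state_a, x1, x2)    # both particles in state a
```

Swapping the labels reverses the sign, and the combination vanishes identically when both particles are assigned the same single-particle state.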

Symmetries in the equations of motion are not limited to continuous time-space transformations, but may also include discrete operations, such as time reversal and parity inversion (mirror reversal). [Fleegello stubbornly maintained that various discrete spacetime symmetries should generally hold, despite contrary evidence. For example, experiments seemed to demonstrate that parity is not conserved during certain types of radioactive decay. Parity is conserved if the equations of motion are unchanged when a system is replaced by its mirror image. Fleegello believed that physics could not be affected by such a simple transformation, and felt that crucial elements had been omitted from experimental analyses. Yet physicists soon realized that, since time and space are intimately linked, and antiparticles are equivalent to ordinary particles moving backward in time, the true symmetry involves the CPT transformation – a combination of particle-antiparticle charge exchange, parity inversion, and time reversal – and not any one of these operations in isolation.] Internal symmetries, that do not transform time-space points, can give rise to additional conserved quantities and observables (e.g., electric charge).

Indeed, the fundamental interactions between elementary particles are thought to derive from a variety of internal local gauge symmetries. For example, consider the electromagnetic interaction. Under a local phase transformation, the single-particle wavefunction ψ(t,x) is multiplied by a phase factor e^(iλ(t,x)), where λ(t,x) is an arbitrary function of time-space. The absolute square (probability density) of ψ is unchanged by this transformation. If local gauge symmetry holds, then the new wavefunction must also satisfy the standard equation of motion. The kinetic energy part of that equation generally contains terms involving both the time- and space-rate of change of ψ, so the phase factor in the transformed wavefunction generates new quantities. The equation is invariant under the transformation only if it also contains terms that transform so as to cancel the effect of the (t,x) dependence in λ, while maintaining the original form of the equation. These terms can be identified with the electromagnetic scalar and vector potentials.
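That a local phase transformation leaves the probability density untouched can be checked with a one-dimensional sketch. The wavefunction psi and the phase function lam are arbitrary assumed examples; the check covers only the invariance of |ψ|², not the compensating potentials.

```python
import cmath
import math

def psi(t, x):
    """Assumed sample wavefunction: a wave packet in one spatial dimension."""
    return cmath.exp(1j * (1.5 * x - 2.0 * t)) * math.exp(-x * x)

def lam(t, x):
    """Arbitrary real function of time and position."""
    return 0.3 * t + math.sin(x)

def psi_transformed(t, x):
    """Wavefunction after multiplication by the local phase factor exp(i*lam)."""
    return cmath.exp(1j * lam(t, x)) * psi(t, x)

points = [(0.0, -1.0), (0.5, 0.2), (2.0, 1.3)]
density_before = [abs(psi(t, x)) ** 2 for t, x in points]
density_after = [abs(psi_transformed(t, x)) ** 2 for t, x in points]
```

The densities agree at every sampled point, although the transformed wavefunction itself differs from the original wherever lam is not a multiple of 2𝜋.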

The physicist Vigno has argued that symmetries do not merely restrict the laws of physics, but further define much of physical reality. While fundamental forces have been related to symmetries in the equations of motion, elementary particles have themselves been associated with (irreducible) mathematical representations of abstract symmetry groups. Every consistent object and process must coexist with every other consistent object and process somewhere within the PCS. This may involve a natural segregation into distinct, self-contained physical universes.

Coordinate systems do not exist a priori in nature. The choice of a coordinate framework to describe a physical system should thus be arbitrary, from a strictly mathematical viewpoint (although one frame may be more convenient than another for a given purpose). It should then be possible to describe the laws of physics in a coordinate-free manner, in which observables appear only as abstract quantities, with no explicit reference to coordinate components. Expressing physical laws in such a covariant manner simplifies identification of symmetries and conserved quantities.

If the PCS is to respect the inherent arbitrariness in the choice of coordinate system, then fundamental physical constants that appear in the laws of physics should also be the same for all observers within a given physical universe, independent of the choice of reference frame. This applies in particular to dimensionless constants (e.g., the fine structure constant of atomic physics), which carry no physical units, but can be expressed as the ratios or products of dimensional constants that do possess units. Changes in the values of dimensional constants are generally meaningful only with respect to changes in their dimensionless combinations. So long as the values of physical constants are individually changed in a way that maintains the values of all fundamental dimensionless constants, the physical world is unaffected. Dimensionless constants stand independent of any arbitrary choice of measurement units. Indeed, no variations over time or space have thus far been detected.

[Some quantities thought to be fundamental constants in Fleegello's era have since been found to be variable. These have been reinterpreted as functions of truly fundamental constants and local physical conditions.]

Dimensionless fundamental constants need only be the same at all points within a particular physical universe. The values in distinct, non-interacting universes may be different. If there is no fundamental reason a constant should have a particular value, then the PCS must encompass a host of universes covering the range of acceptable values. Yet these values should be countable (either discrete/quantized, or at least represented by rational numbers). All the worlds otherwise could not have meaningful existence within the PCS.

Even fundamental dimensional constants (whose numeric values depend on the choice of physical units) should be the same for all observers in a given universe, when measured with respect to reproducible units characteristic of fundamental physical processes. In particular, the speed of light in a vacuum, commonly denoted by the symbol c, appears to constitute a universal limit to the rate at which information can propagate through space. [Note that distinct limiting speeds for different types of information would lead to inconsistencies.]

As first proposed by the physicist Niestu in his theory of inertial invariance, the speed c has the same value for all observers, irrespective of their state of motion. This is contrary to classical expectations, whereby an observer moving toward (away from) a light source detects a higher (lower) relative light speed than an observer at rest with respect to the source. That c is finite may be expected from an ideobasic viewpoint. An infinite speed is a special, limiting case of a general value, and the PCS should opt for the least encumbered conception.

Niestu introduced a major paradigm shift in physics when he showed that a common value for c implies that time (space) intervals measured by one observer may be partially seen as space (time) intervals by an observer in a relative state of motion; time and space do not exist separately, but must be combined into a unified timespace [scientists of Fleegello's era apparently preferred this expression to today's more common term spacetime]. The effect is tiny at low velocities, but becomes significant as speeds approach c (so-called Niestiik speeds). The associated coordinate transformation between reference frames in a relative state of motion is distinct from that of classical physics. If the equations of motion are to remain invariant under a velocity transformation, then those equations must be modified as well. A remarkable consequence of inertial invariance is that any mass m is associated with an energy mc². For a free particle, the relationship between total energy E, momentum p, and rest mass m becomes

E² = p²c² + m²c⁴.
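As a minimal numerical sketch of the relation above (total energy squared equals p²c² plus m²c⁴), the snippet below evaluates the energy of a free particle and checks its two limiting cases. The function name `total_energy`, the SI value of c, and the sample mass and momentum are illustrative assumptions, not part of Fleegello's text.

```python
import math

C = 299_792_458.0  # speed of light in m/s (SI units, an illustrative choice)

def total_energy(p: float, m: float, c: float = C) -> float:
    """Total energy of a free particle from E^2 = p^2 c^2 + m^2 c^4."""
    return math.sqrt((p * c) ** 2 + (m * c ** 2) ** 2)

# Sample values (assumed for illustration only).
m_e = 9.109e-31      # approximate electron mass in kg
p_sample = 1.0e-22   # a momentum in kg m/s

rest_energy = total_energy(0.0, m_e)          # p = 0: reduces to m c^2
massless_energy = total_energy(p_sample, 0.0)  # m = 0: reduces to p c
moving_energy = total_energy(p_sample, m_e)    # general case
```

At rest the energy reduces to mc², and for a zero-mass quantum it reduces to pc, matching the two special cases usually quoted for this relation.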

Niestu ultimately expanded his ideas into the theory of general invariance, which describes gravity in terms of distortions in the geometry of timespace.

[Fleegello overlooked a related serious inconsistency in his view of the CIF. The CIF must encompass all possible reference frames. If It experiences the same time as observers in those frames, as Fleegello envisioned, It must integrate the various time lines to maintain a single unified state of being. Yet if speed c is the same for all observers, events that are simultaneous in one frame may be nonsimultaneous in another. Events could then be seen by the CIF as both simultaneous and not simultaneous, a contradiction. This inconsistency is resolved only if the CIF transcends physical time, and experiences it the way corporeal creatures experience space – as block time. All events in the physical panuniverse then span a single, eternal moment in the mind of the CIF. Yet the CIF must still distinguish the time-like and space-like separations among physical events that define causal chains. Primacy resides in these causal chains, and not in the reference frames that observers use to describe them.]

While inertial invariance was readily incorporated into Shrodiik mechanics for single particles, problems arose for multi-particle systems. In particular, time and space coordinates were not treated coequally in the traditional equations of motion. Inertial invariance requires that time and position both be treated either as system parameters, or as formal operators. Currently the most widely adopted solution, based on the first approach, is to reformulate Shrodiik mechanics into a Niestiik quantum field theory (QFT), in which elementary particles of a given type are treated as quantum excitations of an underlying field. QFT covers both traditional particles with mass, like the electron, and zero-mass particles once considered pure waves, like the photon. Different particle types are represented by distinct fields, defined by a variety of attributes including rest mass, spin, and electric charge. For each field type, a position field operator and a conjugate momentum field operator, now functions of common timespace parameters (t,x), replace the single-particle 3D position and momentum operators x̂j and p̂j for the discrete particles j of Shrodiik mechanics. Because all fields share one time parameter, QFT (like Shrodiik mechanics) is a single-time theory (STT).

A simple field state in QFT is characterized by the number of (identical) quanta occupying each of a set of allowed levels. The number of quanta is just the number of "particles" of the given type. Field quanta contain no explicit particle labels; QFT respects the exchange symmetry of identical particles in a remarkably natural way. Indeed, in QFT it can be shown that fields with half-integral spin must be antisymmetric, and those with integral spin symmetric [dictated by the distinct Niestiik equations of motion for fermions versus bosons]. Many physicists prefer not to speak of particles at all in QFT, but only quanta. A general field state can be represented by a superposition of simple states. Unlike in Shrodiik mechanics, this is not limited to states with a fixed number of particles; interactions between fields result in the routine creation/destruction of quanta. The overall state of a system is represented by the direct product of its constituent fields, or more generally by a superposition of such products.
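The occupation-number picture described above can be sketched in a few lines. This is only a bookkeeping toy under stated assumptions: it tracks how many quanta sit in each level, ignores amplitudes, normalization factors and exchange signs, and the names `create`, `destroy` and `total_quanta` are hypothetical. The single-occupancy rule for fermions is the familiar consequence of antisymmetry.

```python
from collections import Counter

def create(state: Counter, level: int, fermion: bool = False) -> Counter:
    """Return a new simple state with one more quantum in `level`."""
    if fermion and state[level] >= 1:
        # Antisymmetric (half-integral spin) fields allow at most one quantum per level.
        raise ValueError("a fermion level holds at most one quantum")
    new = Counter(state)
    new[level] += 1
    return new

def destroy(state: Counter, level: int) -> Counter:
    """Return a new simple state with one quantum removed from `level`."""
    if state[level] == 0:
        raise ValueError("no quantum to remove from this level")
    new = Counter(state)
    new[level] -= 1
    return +new  # unary plus drops levels whose count fell to zero

def total_quanta(state: Counter) -> int:
    """Number of 'particles' of this field type in a simple state."""
    return sum(state.values())

vacuum = Counter()
two_bosons = create(create(vacuum, 0), 0)   # two quanta in the same level
one_boson = destroy(two_bosons, 0)          # the quantum count is not fixed
```

Note that the state carries no particle labels at all, only counts per level, which is the sense in which exchange symmetry is built in.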

Shortly after QFT was introduced, the mathematician Draci proposed a multi-time theory (MTT) alternative, in which both the (observer-based) times and positions (tj, xj) of various particles j are now distinguished, and associated with coequal system operators (t̂j, x̂j). The earliest version was a simple extension of Shrodiik STT. For a system of fixed N particles, and an observer in an inertial reference frame (t, x), the associated multi-time (MT) wavefunction has the form Ψmta(t1, x1; t2, x2; ...; tN, xN ). This reduces to the single-time (ST) wavefunction of Shrodiik mechanics if all the tj are set to a common time t. Just as the ST wavefunction was defined over a constant-time surface, Ψmta is only defined over appropriate space-like hypersurfaces. As in Shrodiik STT, |Ψmta|² is a probability per unit 3N-dimensional (3ND) spatial volume. Normalization requires that the integral of |Ψmta|² over all 3N spatial coordinates on a specified hypersurface equals unity.

A later version of MTT was a more radical departure from single-time theory, but more attuned to inertial invariance and the vagaries of measurement, and is adopted here. Every particle j in MTT has an innate proper time dimension, measured along the particle's world line. Different time lines are not inherently synchronized, but correlated only through interactions. To locate at time to the position xj of particle j, an observer must interact with the particle at some particle proper time τj. Due to a synchronization ambiguity [Fleegello elucidates this point later], τj is not in general well defined for any given to and xj, so there must be a probability distribution over a range of τj. The wavefunction Ψmt should then separately incorporate the observer time to at which the (τj, xj) are measured, and feature a probability distribution over each τj for any (to, xj). Inertial invariance requires an additional spatial coordinate xo, paired with the time coordinate to. The overall functional dependence of Ψmt thus becomes Ψmt(to, xo; τ1, x1; τ2, x2; ...; τN, xN ). This is a joint probability amplitude that, when the observer is at time to, it somehow sees itself at xo, and each particle j at (τj, xj).

It is often convenient to express all times in Ψmt from a common observer perspective. Assume there is at least a probabilistic Niestiik-like transformation relating timespace coordinates (τj,0) in the particle rest frame to (tj, xj) in the observer frame [where tj≅τj for non-Niestiik speeds]. For a given (to, xj), there should then be a probability distribution over tj corresponding to the distribution over τj, centered on but not limited to to. The functional dependence of the wavefunction can then be transformed to Ψmt(to, xo; t1, x1; t2, x2; ...; tN, xN ).

As in STT, Ψmt is defined over spacelike surfaces of constant to. But now Ψmt can be normalized in a Niestiik-invariant manner, provided that |Ψmt|² is a probability per invariant 4ND particle timespace (tj,xj) volume and 4D observer timespace (to,xo) volume. The integral over all tj, xj, xo, and an invariant range of to should then be unity. Because Ψmt is defined for single values of to, the integral over to makes physical sense only if there is a minimum proper time interval Δo along the observer time line, and the integral over to is confined to Δo at to.

Note that the new MT wavefunction Ψmt does not simply reduce to the ST wavefunction when all tj are set to a common to. Times to and τj are not assumed to be well correlated, though for non-Niestiik speeds it may be possible to set the average value of each tj equal to to. Yet MTT does not posit that any material particle evolves along multiple time dimensions. Each particle j evolves along a single proper time line τj. The corresponding tj are observer-based, and measured with respect to a single observer time line. From the adopted MT perspective, a multiparticle system can be accurately described using one time coordinate only if to and the tj are sufficiently correlated.

Unlike traditional Shrodiik physics, Ψmt incorporates an observer state, and so applies not only to observed objects, but also to the observer itself. If the observer is a massive composite entity, xo may be identified as the 3D position of the center of mass (COM) of its observing apparatus, within the observer’s frame of reference. It is natural for the observer to select an inertial observer frame centered on the average position of this COM. However, an inertial observer may select any frame moving at constant velocity relative to its COM rest frame. The overall wavefunction may then include not only a to-dependent energy term, but also an xo-dependent self-momentum term. By summing over a range of states, it should be possible to localize xo in Ψmt to values close to the COM.

A typical measuring device is designed to select a single eigenstate of a specified observable, even if an observed system starts in a superposition of states. Interactions between the device and system should smoothly generate a superposition of decohered composite eigenstates, each with its own observer and measured value, as per Evette. However, measuring equipment is typically not explicitly represented in Ψmt, or is treated as a composite object characterized by position xo alone. Ψmt then cannot possibly capture the complex evolution of the composite system into a sum of decohered states, but must appear to collapse discontinuously during a measurement to an eigenstate of the measured quantity. Only if an observing apparatus is sufficiently represented and integrated with the observed system in Ψmt can the wavefunction evolve smoothly during an observation. An observer’s experience is the same, whether Ψmt appears to collapse or not.

It may seem exceptional that observer self-coordinates (to,xo) refer to a composite macroscopic object, rather than a simple particle. Yet even the observed objects in Ψmt are not limited to elementary particles. Any object may be represented in Ψmt, so long as it can be treated like a single, unified entity within the given context and level of approximation. Define a porticle as any physical entity (elementary or composite) in MTT that can be represented in Ψmt as a mathematical object that evolves along its own proper time line. In contrast, particles are traditionally viewed in physics as miniature bogleballs, embedded in an external 4D timespace. To distinguish MT from other theories, it is helpful to now replace the term “particle” with “porticle” throughout MTT [early versions of Principia omit this (post-Fleegello?) substitution].

From the perspective of a porticle j, the energy associated with j itself should be proportional to the time rate of change of Ψmt with respect to τj (with to and all other τk held constant). From the observer perspective, the energy of porticle j is then proportional to the time rate of change of Ψmt with respect to tj (with to and all other tk constant). Because to derives from the observer’s own proper (rest-frame) time, then from the same perspective, the time rate of change of Ψmt with respect to to (with all tj constant) should be proportional to the observer self-energy, and NOT the total energy of the system. The total system energy is instead the sum of the individual porticle energies, plus observer self-energy.

If there are no porticle interactions, Ψmt should separately satisfy the free-porticle equations of motion for each porticle j, as well as for the observer. If there are also no observer-porticle interactions, observer-porticle distances cannot be defined, or the relation between to and the tj established. Then Ψmt can only be a function of to and proper times τj. In this case, Ψmt can be constructed from products of free energy eigenstates e^(-iωo to) and e^(-iωj τj), where ωo and ωj are the angular frequencies associated with rest masses mo and mj of the observer and porticle j, respectively.

If there are minimal observer-porticle interactions but still no interporticle forces, Ψmt can be constructed from products of energy-momentum eigenstates e^(-iωo to + iko·xo) and e^(-iωj tj + ikj·xj), where ko and kj are wavevectors corresponding to the (observer-based) momenta of the observer and porticle j. Now ωo and ωj are frequencies associated with the overall energies of the observer and porticle j. The observer has the same chance of seeing any tj at a given to.
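The last remark can be checked numerically: a product of pure phase factors has the same modulus at every tj, so it expresses no preference among porticle times. The sketch below assumes one spatial dimension, a single porticle, unnormalized amplitudes and arbitrary sample frequencies; the function name `free_eigenstate` is hypothetical.

```python
import cmath

def free_eigenstate(t_o, x_o, t_j, x_j, w_o, k_o, w_j, k_j):
    """Product of observer and porticle plane-wave factors (1D, unnormalized)."""
    observer = cmath.exp(-1j * w_o * t_o + 1j * k_o * x_o)
    porticle = cmath.exp(-1j * w_j * t_j + 1j * k_j * x_j)
    return observer * porticle

# Fix the observer time t_o and scan several porticle times t_j.
sample_times = (-3.0, 0.0, 4.2)
densities = [
    abs(free_eigenstate(0.0, 0.0, t_j, 0.3, 2.0, 0.5, 1.5, 0.7)) ** 2
    for t_j in sample_times
]
# Every entry of `densities` is the same: the phase varies with t_j,
# but the probability density does not.
```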

With interporticle forces, interaction terms must be introduced into the equations of motion, resulting in N+1 coupled equations of motion for an N-porticle plus observer system. Draci has shown that, for any MTT, the respective interaction terms must satisfy a consistency condition, or the evolution of Ψmt along the various tj is not well-defined. Finding consistent MT equations with interactions is a challenge. Most notably, conventional interaction potentials always lead to inconsistency. Interactions must then be implemented by other means, in particular through the use of creation and destruction operators (defined to maintain appropriate symmetry under identical porticle exchange), as in QFT. While the simplest forms of MTT are restricted to fixed N, use of these operators allows N to change.

It is often useful to adopt composite MT timespace coordinates to replace the (tj,xj). For an observer plus N=2 porticles (j=1 or 2), define

X = (a1x1 + a2x2) and r12 = (x2 - x1) ,

where a1 and a2 are constants with (a1+a2)=1. Center-of-mass (COM) coordinates with aj = mj/(m1 + m2) are routinely used in non-Niestiik analyses of two-body systems. Analogous time coordinates may be defined, by

T = a1t1 + a2t2 and ρ12 = (t2 - t1) .

At Niestiik speeds, center of momentum (also COM) coordinates are appropriate, with aj = ωj/(ω1 + ω2) for free porticles. If the porticles interact, individual energies Ej = ℏωj are no longer constant, but a constant-velocity COM can still be defined.

The (to,xo; t1,x1; t2,x2) are thus replaced by composite COM coordinates (to,xo; T,X; ρ12,r12). Recall that (to,xo) is already inherently composite, if the observer (including all measuring devices) is a macroscopic entity composed of myriad material porticles. While this (to,xo) could in principle be included in the definition of (T,X), and (to,xo) replaced by coordinates with respect to a grand observer-plus-observed COM, such blurring of the observer with the observed is not generally useful in MTT.

The observer is nonetheless integrally connected to the observed two-porticle system. Interactions between the observer and the porticles define the COM motion of that system. If the porticles are otherwise isolated, then ideally porticle interactions determine the primary dependence of Ψmt on both ρ12 and r12, but interactions with the observer must inevitably have some residual effect.

The (T,X) and (ρ12,r12) each transform in the same way as any conventional (t,x). As long as (a1 + a2)=1, the rate of change of Ψmt with respect to T (with ρ12 fixed) equals the sum of the rates of change of Ψmt with respect to t1 and t2 (with t2 or t1 fixed, respectively). The rate of change with respect to X (with r12 fixed) is similarly the sum of the rates of change with respect to x1 and x2 (with x2 or x1 fixed, respectively). These results can be extended to N > 2 porticles. Total porticle energy E and momentum P, equal to the sums of the individual porticle energies Ej and momenta pj, are then proportional to the rates of change of Ψmt with respect to T and X, respectively. If every (xj, pj) and (tj, Ej) form a pair of complementary, non-commuting operators, so do (X, P) and (T, E).
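The sum rule for the time coordinates follows from the chain rule once the definitions of T and ρ12 are inverted. The derivation below is a sketch in conventional notation (subscripts written explicitly), using only the definitions given above; the spatial case is identical with (X, r12, x1, x2) in place of (T, ρ12, t1, t2).

```latex
\begin{aligned}
T &= a_1 t_1 + a_2 t_2, \qquad \rho_{12} = t_2 - t_1, \qquad a_1 + a_2 = 1 \\
t_1 &= T - a_2\,\rho_{12}, \qquad t_2 = T + a_1\,\rho_{12} \\
\left.\frac{\partial \Psi_{mt}}{\partial T}\right|_{\rho_{12}}
  &= \frac{\partial t_1}{\partial T}\,\frac{\partial \Psi_{mt}}{\partial t_1}
   + \frac{\partial t_2}{\partial T}\,\frac{\partial \Psi_{mt}}{\partial t_2}
   = \frac{\partial \Psi_{mt}}{\partial t_1} + \frac{\partial \Psi_{mt}}{\partial t_2} \\
\left.\frac{\partial \Psi_{mt}}{\partial \rho_{12}}\right|_{T}
  &= a_1\,\frac{\partial \Psi_{mt}}{\partial t_2} - a_2\,\frac{\partial \Psi_{mt}}{\partial t_1}
\end{aligned}
```

The inversion in the second line holds only because a1 + a2 = 1, which is why that condition appears in the statement of the result.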

Energy / linear momentum eigenfunctions for two free porticles can now be written

Ψmt(free) ∼ e^(-iωo to + iko·xo) e^(-iΩT + iK·X) e^(-iωρ ρ12 + ikr·r12)

where (Ω,K)=(ω1+ω2, k1+k2) and (ωρ,kr)=(a1ω2-a2ω1, a1k2-a2k1). Note that ωρ (and so all dependence on ρ12) vanishes if aj=ωj/(ω1+ω2) (Niestiik COM)! The free eigenstate shows no preferred value of T, X, ρ12 or r12 at any to. More generally, interactions between the observer and the two-porticle system determine the time correlation between to and T. Interactions between porticles 1 and 2 determine not only the primary dependence of Ψmt on r12, but also the correlation between t1 and t2, and so the dependence of Ψmt on ρ12. For strong repulsive interactions, Ψmt should be significant only for large values of the Niestiik-invariant timespace separation S12 ≡ √(r12² - c²ρ12²). For strong attractive interactions, there should be solutions of Ψmt localized to small values of S12. If t1 and t2 become tightly correlated, ρ12 is confined to values near zero, and the porticle-related time dependence of Ψmt is mainly through T.
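The composite frequencies can be checked with a few lines of arithmetic. The sketch below verifies that the temporal phase written in (T, ρ12) equals the phase written in (t1, t2), that ωρ vanishes for center-of-momentum weights, and that S12 is unchanged by a boost. It assumes one spatial dimension, units with c = 1, a conventional Lorentz-type boost, and arbitrary sample numbers; all function names are hypothetical.

```python
import math

def composite(t1, t2, a1, a2):
    """Return (T, rho12) for weights satisfying a1 + a2 = 1."""
    return a1 * t1 + a2 * t2, t2 - t1

def composite_frequencies(w1, w2, a1, a2):
    """Return (Omega, omega_rho) as defined in the text."""
    return w1 + w2, a1 * w2 - a2 * w1

def separation(r12, rho12, c=1.0):
    """Invariant separation sqrt(r12^2 - c^2 rho12^2) for a space-like pair."""
    return math.sqrt(r12 ** 2 - (c * rho12) ** 2)

def boost(t, x, v, c=1.0):
    """Conventional boost along one axis, applied to a (t, x) pair."""
    g = 1.0 / math.sqrt(1.0 - (v / c) ** 2)
    return g * (t - v * x / c ** 2), g * (x - v * t)

w1, w2 = 3.0, 5.0
t1, t2 = 1.2, 2.0

# 1. Phase identity for arbitrary weights with a1 + a2 = 1.
a1, a2 = 0.25, 0.75
T, rho = composite(t1, t2, a1, a2)
Omega, w_rho = composite_frequencies(w1, w2, a1, a2)
phase_individual = w1 * t1 + w2 * t2
phase_composite = Omega * T + w_rho * rho

# 2. Center-of-momentum weights remove the rho12 dependence.
_, w_rho_com = composite_frequencies(w1, w2, w1 / (w1 + w2), w2 / (w1 + w2))

# 3. S12 is the same before and after a boost of (rho12, r12).
s_before = separation(3.0, 1.0)
rho_b, r_b = boost(1.0, 3.0, v=0.6)
s_after = separation(r_b, rho_b)
```

The third check relies on the statement that (ρ12, r12) transforms like any conventional (t, x).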

Both QFT and standard MTT assume a pre-existing, observer-based, four-dimensional (4D) timespace framework (t, x). Even if this timespace is affected (warped) by matter and energy, it is not created by them. Yet what is the origin of timespace itself, and how are its coordinates meaningfully defined at all for a multiporticle system? Neither time nor space can be measured in absolute terms. Temporal and spatial intervals are gauged only with respect to physical processes and structures, which are traditionally interpreted in terms of elementary porticles and their interactions. Stripped of these vestments, timespace loses all meaning. Physical objects and dimensions of relation are inextricably linked.

Every elementary porticle with mass does have an innate proper time dimension. For every external observer time tj in standard MTT, there is a corresponding internal proper time τj. Indeed, MTT can be formulated using the more fundamental τj. Yet because distinct proper time lines are not inherently synchronized, and correlated only by interactions, further temporospatial relationships can be defined only if porticles interact.

Interactions corresponding to the fundamental forces are associated with gauge symmetries. In QFT, their description can be interpreted in terms of the exchange of phantom elementary gauge bosons by elementary fermions. The electromagnetic, weak, and strong interactions involve the exchange of phantom photons, W and Z bosons, and gluons, respectively (all spin-1). Phantom porticles have all the attributes of their real counterparts, except mass; the usual relationship between energy, momentum and rest mass is not followed, making these porticles ephemeral. Many physicists consider phantom porticles not real in any sense, but merely a bookkeeping device. Elementary fermions include electrons, neutrinos, and quarks (all spin-1/2). [Fleegello's archaic list omits various forms of shadow matter, that interact with ordinary matter solely through gravity.] Only the gravitational force, which is ostensibly associated with the exchange of phantom gravitons (spin-2, and normally massless), has eluded incorporation into the QFT framework.

While phantom porticles were introduced in QFT, gauge symmetries in MTT should lead to expressions for interactions with an analogous interpretation in terms of phantom porticles. Because phantom bosons in either approach are superpositions spanning energies and momenta that do not respect standard mass relationships, their exchange should not be literally interpreted in terms of porticle trajectories. Yet they do link real porticles in MTT, and transfer information at light speed. Physical objects may also be linked and transfer information via real porticles, though energy-momentum conservation in this case may require either composite porticles or paired links. The exchange of either phantom or real porticles can thus establish causal links (CLs) – phantom causal links (PCLs) or real causal links (RCLs), respectively.

Because individual porticles can be identified in MTT, but not in QFT, fully exploiting CLs requires an MT approach. Consider then a CL of any type from porticle j at proper time τj to porticle k at τk. CLs are universal; all observers recognize the same connections, at the same proper times. CLs are directional; time (and information) flows forward or backward from one porticle to another. This interporticle direction may be indicated by a binary parameter θjk=±1, where value +1 signifies forward flow from j to k, and -1 signifies reverse flow. Every [electromagnetic] CL can also be associated with a three-component (equivalent to a 3D) unit vector ujk that defines a 3D spatial direction of flow at τk.

[While the parameter θjk is useful, it is not strictly necessary, as the information is already encoded in the temporal frequencies associated with the link. In quantum physics, a temporal phase factor e^(-iωt) indicates flow of positive energy along increasing time t when frequency ω>0. The factor e^(-iωjk(τk-τj)) thus indicates flow of positive energy from j to k only when frequency ωjk>0. If ωjk<0, the factor can be rewritten as e^(-iωkj(τj-τk)), with frequency ωkj=-ωjk>0, indicating flow of positive energy from k to j. Thus only positive frequencies ωjk and ωkj are allowed for θjk=+1 and θjk=-1, respectively.]
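The rewriting described in this note is a simple identity between phase factors, which the following sketch checks numerically. The function names `link_phase` and `flow_direction` and the sample values are hypothetical, chosen only for illustration.

```python
import cmath

def link_phase(omega_jk: float, tau_j: float, tau_k: float) -> complex:
    """Temporal phase factor exp(-i * omega_jk * (tau_k - tau_j)) for a link j to k."""
    return cmath.exp(-1j * omega_jk * (tau_k - tau_j))

def flow_direction(omega_jk: float) -> int:
    """Return theta_jk: +1 if positive energy flows j to k, -1 if it flows k to j."""
    if omega_jk == 0:
        raise ValueError("a zero frequency does not define a flow direction")
    return 1 if omega_jk > 0 else -1

tau_j, tau_k = 0.4, 1.9
omega_jk = -2.5                      # negative frequency on the j-to-k link
omega_kj = -omega_jk                 # equivalent positive frequency, k to j

as_written = link_phase(omega_jk, tau_j, tau_k)
rewritten = link_phase(omega_kj, tau_k, tau_j)   # roles of j and k swapped
theta = flow_direction(omega_jk)
```

Here `as_written` and `rewritten` are the same complex number, and `theta` is -1, so the sign of the frequency alone carries the direction information.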

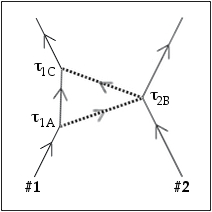

Let an elementary event be any point on the world line of a porticle at which a CL is established with another porticle. The physicists Machi and [later] Niestu have proposed that the network of CLs among porticles does not merely occur within timespace, but even defines timespace. The number of spatial dimensions is just the number of components in ujk.  For example, consider the pair of electromagnetic links between two charged porticles #1 and #2 in the diagram at right. Suppose a CL (dotted line) connects #1 (solid line) at proper time τ1A, defining event A, to #2 at τ2B, marking event B; and a second CL connects #2 at τ2B to #1 at τ1C, or event C. Arrows point in the direction of time.

The location of porticle 2 from the perspective of porticle 1 (a non-inertial "observer" in this case) is

**r**12B = r12B **u**1AB

at time τ1o = (τ1A+τ1C)/2, where u1AB is a 3D unit vector pointing in the spatial direction of flow from A to B at τ2B, and r12B is the scalar interporticle distance

r12B = (τ1C-τ1A) c/2 .

From the same perspective, u1BC = -u1AB. Note that neither τ2B nor the relative interporticle speed v appears in these equations. If τ1 and τ2 are not perfectly correlated (by the probability distributions for all possible interactions, which coincidentally define v), a range of τ2B values could give the same result. τ1B (which is not necessarily equal to τ1o) should be related to τ2B by at least a probabilistic, Niestiik-like (due to v-dependence) transformation. For non-Niestiik motion, one can set τ1B~τ2B based on the single interaction. However, τ1B must equal τ1o only if τ1 and τ2 are perfectly correlated. More generally, τ1B has a range of possible values, corresponding to the range of τ2B, but (with even minimal synchronization) centered on τ1o.
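The link-pair construction amounts to a round-trip timing measurement made entirely on the world line of porticle 1. A minimal sketch, assuming the two formulas given above and a hypothetical function name `radar_fix`:

```python
def radar_fix(tau_1A: float, tau_1C: float, c: float = 1.0):
    """Return (tau_1o, r_12B) from the departure and return times of a link pair.

    Only proper times on the world line of porticle 1 are used; neither
    tau_2B nor the relative speed v enters.
    """
    if tau_1C < tau_1A:
        raise ValueError("the return link must not precede the outgoing link")
    tau_1o = 0.5 * (tau_1A + tau_1C)        # assigned time of the distance fix
    r_12B = 0.5 * (tau_1C - tau_1A) * c     # scalar interporticle distance
    return tau_1o, r_12B

# Illustrative values (assumed): a 2-microsecond round trip, SI units.
tau_1o, r_12B = radar_fix(0.0, 2.0e-6, c=299_792_458.0)
```

With these sample inputs the distance comes out close to 300 m, assigned to a time halfway through the round trip.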

From the perspective of #2, the situation is more nuanced. Timespace coordinates of event B are (τ2B,0). It is not generally true that the two link paths are equal, or that u2BC = -u2AB. Interporticle distance r21B at τ2B now depends on v, with Niestiik corrections expected. While r21B and v can be related to the times (τ2A, τ2B, τ2C), deriving τ2A from τ1A, and τ2C from τ1C, depends on the correlation between τ1 and τ2. The interaction defines a definite correspondence τ1o=τ1B=τ2B, with a common interporticle separation r21B = r12B, only if time is absolute (as in classical physics), and the time lines are synchronized.

Multiple serial links are required to correlate time lines and define interporticle distance over time. Yet the properties of successive links must be compatible, and accord with physical principles. In particular, adjacent CLs should reflect a consistent sense in the flow of time, or distance is ill-defined. Relative speeds must not exceed c. Changes in velocity (acceleration) should reflect the transfer of linear momentum by CLs.

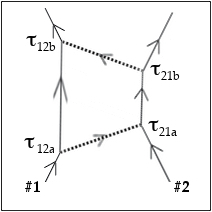

Consider now the more general set of link pairs depicted at right, again between porticles 1 and 2. Introduce a new labeling scheme, whereby τjk is the proper time on the world line of porticle j, for a link connecting j to k. Let ∩12 represent the joint probability amplitude for the 1-2 link pairs, from the perspective of #1 (the first index), with functional dependence ∩12 (τ12a, τ21a, θ12a, u12a; τ12b, τ21b, θ12b, u12b). Assume that ∩12 is a complex quantity, and that the connection probability per τ12a, τ21a, τ12b, τ21b, solid angle in u12a, and solid angle in u12b is |∩12|².

With respect to 1 at τ1o, distance r12 is defined by the special link pair AB and BC considered earlier, with τ1A=τ1o-r12/c and τ1C=τ1o+r12/c. These link pairs correspond to setting τ12a=τ1A, τ21a=τ21b=τ2B, τ12b=τ1C, θ12a=+1=-θ12b, and u12a=u1AB=-u12b in ∩12. The joint ABC link-pair amplitude is then ∩12 (τ1A, τ2B, +1, u1AB; τ1C, τ2B, -1, -u1AB). In terms of τ1o and r12, this is ∩12 (τ1o-r12/c, τ2B, +1, u1AB; τ1o+r12/c, τ2B, -1, -u1AB).
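The substitution just described can be made explicit by assembling the two argument groups of ∩12 from (τ1o, τ2B, r12, u1AB). The sketch below only builds the arguments; it does not model the amplitude itself. The `Link` record, its field names and the function `abc_link_pair` are hypothetical.

```python
from typing import NamedTuple, Tuple

Vec3 = Tuple[float, float, float]

class Link(NamedTuple):
    tau_12: float   # proper time of the link on the world line of porticle 1
    tau_21: float   # proper time of the link on the world line of porticle 2
    theta: int      # +1 for flow from 1 to 2, -1 for flow from 2 to 1
    u: Vec3         # 3D unit vector of the link, from the perspective of 1

def abc_link_pair(tau_1o: float, tau_2B: float, r12: float,
                  u_1AB: Vec3, c: float = 1.0) -> Tuple[Link, Link]:
    """Arguments of the special ABC link pair, expressed through tau_1o and r12."""
    reversed_u = tuple(-component for component in u_1AB)
    link_a = Link(tau_1o - r12 / c, tau_2B, +1, u_1AB)       # link A to B
    link_b = Link(tau_1o + r12 / c, tau_2B, -1, reversed_u)  # link B to C
    return link_a, link_b

link_a, link_b = abc_link_pair(tau_1o=10.0, tau_2B=9.7, r12=2.0,
                               u_1AB=(0.0, 0.0, 1.0))
# The distance is recovered from the two times on the world line of 1.
recovered_r12 = 0.5 * (link_b.tau_12 - link_a.tau_12)
```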

The average of |∩12|² over (r12,u1AB) for links ABC defines a probability distribution from the perspective of 1 at τ1o that 2 is at time τ2B, as well as the most probable value τp2B, for any given τ1o. The distribution width along τ2B is a measure of the correlation between the time lines τ1 and τ2 at τ1o, which is in general not one-to-one. Single-time theory then cannot most accurately describe the system.

Similarly, the average of |∩12|² over (τ2B,u1AB) defines a probability distribution with respect to 1 that 2 is at distance r12. As in standard Shrodiik physics, the width of this distribution may be nonzero even when τ1 and τ2 are well-correlated.

Remarkably, |∩12|² for the link pair ABC has units equivalent [within a conversion factor c⁻³] to a probability per 4D (τ2B, r12) timespace volume, just like the probability of an MT observer seeing porticle 2 at τ2B and r12. Apart from an expected x1 dependence, a CL-related partial wavefunction ɸmt can then be identified, with

ɸmt(τ1; τ2, **r**12) ~ ∩12 (τ1-r12/c, τ2, +1, **r**12/r12; τ1+r12/c, τ2, -1, -**r**12/r12).

For any given τ1, the quantity |ɸmt|² defines a joint probability distribution over (τ2, r12). Amplitudes should be normalized such that the integral of |ɸmt|² over all (τ2, r12) is unity.

In order to incorporate and interpret a variable xo in the MT wavefunction ɸmt(to, xo; ...), a student of Draci has proposed that a physical object may even have PCLs to itself. Consider the world line of porticle 1, still from the perspective of 1. A self-link connecting τ11a to τ11b effectively extends out a distance r11~(τ11b-τ11a)c/2 in direction u1a1b=r11/r11 at τ1o=(τ11a+τ11b)/2. The link-pair amplitude for a pair of self-links 1a-1b and 1c-1d may be written ∩11 (τ11a, τ11b, +1, u1a1b; τ11c, τ11d, +1, u1c1d) defining a partial wavefunction

ɸmt(τ1, **r**11) ~ ∩11 (τ1-r11/c, τ1+r11/c, +1, **r**11/r11; τ1-r11/c, τ1+r11/c, +1, **r**11/r11).

Although porticle 1 is not a classic observer, this ɸmt is defined from the perspective of 1, so (τ1, r11) is equivalent to (to, xo) in ɸmt. The two links associated with ɸmt(τ1, r11) are indistinguishable, so r11 is in effect defined by a single link rather than a pair of links. |ɸmt|² now has units equivalent to a probability per invariant 4D timespace (τ1, r11) volume, like any other link pair. The integral of |ɸmt|² over both r11 and some invariant range of τ1 should then equal unity for any τ1. This makes sense only if there is a minimum proper time interval Δ1 (which may differ from Δo along the composite observer proper time line to in ɸmt), and the integral over τ1 is confined to Δ1 at τ1.

Self-links may occur along the world lines of classic observers, as well as ordinary porticles. They thus offer a way to physically define observer self-distance xo, just as link pairs define interporticle distances. Indeed, it may be that xo is meaningfully defined only by self-links. Self-links may mark elementary porticles as extended objects, and even embody classic force fields (e.g., the electric field of an isolated charged porticle). As such, they would contribute to the rest-mass energies that dominate ɸmt in the low-interaction limit.

In a two-porticle system, a self-link may become correlated with links between the porticles, such that the corresponding joint link amplitude is not a product of two simple amplitudes. Let ∧jk represent the full joint link amplitude, including all self-links, for CLs involving two porticles j and k, where j and k are either 1 or 2 in the current example. Amplitude ∧jk is functionally dependent on (τ11a, τ11b, θ1a1b, u1a1b), (τ11c, τ11d, θ1c1d, u1c1d), (τ12a, τ21a, θ12a, u12a), (τ12b, τ21b, θ12b, u12b), (τ22a, τ22b, θ2a2b, u2a2b), and (τ22c, τ22d, θ2c2d, u2c2d).

Based on its relationship with the standard wavefunction, it is convenient to now redefine ∩jk as a truncated link-pair amplitude only including CLs with at least one end anchored to the world line of porticle j (the first amplitude index). For example, ∩12 includes link pairs 1-1 and 1-2, but not 2-2. While the full amplitude ∧jk is a more complete representation of a system, ∩jk can be derived from ∧jk by appropriately averaging over extraneous link-pairs.

This scheme can be extended to systems with N>2 porticles. Links between different porticle pairs may become correlated, such that joint link amplitudes cannot be written as products of one- or two-porticle amplitudes. The N=3 joint amplitude for porticles 1, 2 and 3 may be written ∧123, and includes links 1-1, 1-2, 1-3, 2-2, 2-3, and 3-3. The truncated amplitude ∩123 includes links 1-1, 1-2, and 1-3.
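The bookkeeping of which link types enter the full and truncated amplitudes can be enumerated mechanically. The sketch below lists unordered porticle pairs (self-links included) for the full amplitude, and keeps only those anchored on the reference porticle for the truncated one. The function names are hypothetical, and only the link types are listed, not the amplitudes.

```python
from itertools import combinations_with_replacement

def full_links(porticles):
    """All link types in the full joint amplitude, self-links included."""
    return list(combinations_with_replacement(porticles, 2))

def truncated_links(porticles, reference):
    """Link types with at least one end on the world line of `reference`."""
    return [pair for pair in full_links(porticles) if reference in pair]

full_12 = full_links([1, 2])                  # link types in the full N=2 amplitude
trunc_12 = truncated_links([1, 2], 1)         # link types in the truncated N=2 amplitude
full_123 = full_links([1, 2, 3])              # link types in the full N=3 amplitude
trunc_123 = truncated_links([1, 2, 3], 1)     # link types in the truncated N=3 amplitude
```

For N=3 this reproduces the lists given above: six link types in the full amplitude and three in the truncated one.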

Amplitudes ∩, ∧ and ɸmt should evolve in a smooth manner until a measurement is made. If they do not directly incorporate the measuring device, they must then discontinuously jump to eigenstates of the measured quantity. However, the role of observer is ambiguous here. Who performs a measurement – the reference porticle, or a distinct external observer? The reference porticle is not equipped to perform a classic measurement, and is a non-inertial platform. The ∩ and ∧ further utilize a mixed perspective, relying on proper times with respect to distinct porticles. While proper times are independent of perspective, 3D directions and the values of quantities transferred by CLs are not. It is thus customary to express functional dependence in terms of quantities defined from a common perspective.

In the traditional external-perspective MT (MTe) approach, a wavefunction is defined from the perspective of an inertial observer external to the system being examined. As described earlier, this observer is typically an organization of objects that establishes a common timespace framework, and provides a consistent perspective from which to survey a system. The observer should minimally perturb that system, except during a measurement. The position of a porticle #1 is defined by CL pairs connecting the observer at to1 with the porticle at t1o, where t1o is the most likely to (measured in the observer frame) corresponding to τ1o. The full observer-based link-pair amplitude may be represented by Γo1 (including o-o, o-1, and 1-1 link pairs), and the truncated amplitude by γo1 (with only o-o and o-1 pairs).

Truncated MTe amplitudes generally embody all information directly accessible to an external observer by measurement. The γo1 defines a partial wavefunction

ɸo1(to, xo; t1, x1) ~ γo1 (to-xo/c, to+xo/c, +1, +**x**o/xo; to-xo/c, to+xo/c, +1, +**x**o/xo; to-x1/c, t1, +1, +**x**1/x1; to+x1/c, t1, -1, -**x**1/x1) .

The MTe formalism is readily extended to N>1 porticles. For a pair of porticles 1 and 2, link-pair amplitudes are Γo12 and γo12, defining ɸo12(to, xo; t1, x1; t2, x2). Links involving only 1 and 2 are defined in Γo12, but not γo12. Now ɸo12 defines a joint probability amplitude that the observer at to sees itself at xo, porticle 1 at (t1, x1), and porticle 2 at (t2, x2). Units of |ɸo12|² are probability per 4D (to, xo) timespace volume for the observer (defined over interval Δo at to), and per 4D (tj, xj) timespace volume for every other j (defined over all tj).

When averaging over link pairs to derive γ from Γ, information is lost. Truncated link-pair amplitudes are nonetheless indirectly affected by processes explicitly represented only in the full link-pair amplitude. For example, even though the truncated amplitude γo12 does not directly include any 1-2 links, the o-1 and o-2 link pairs defining the joint probability amplitude for observer-porticle vectors x1 and x2 effectively define the amplitude for the interporticle vector (x2-x1).

When deriving γ from Γ, it may sometimes be useful to either retain or discard extra information. An alternate truncated link amplitude γ~ may then be defined, that contains more or less information than the standard amplitude γ, and is associated with an alternate partial wavefunction ɸ~. For example, suppose a wavefunction for porticles 1 and 2 is desired that retains direct information concerning all self-links. Then an amplitude γo12~ excluding only 1-2 link pairs can be derived from Γo12, defining the alternate partial wavefunction ɸo12~(to, xo; t1, x1, x11; t2, x2, x22). Here ɸo12~ is a joint probability amplitude that the observer at to sees itself at xo, porticle 1 at (t1, x1) with a self-displacement x11 (at t11=t1 in Γo12), and porticle 2 at (t2, x2) with a self-displacement x22 (at t22=t2 in Γo12). Units of |ɸo12~|² are probability per 4D (to, xo) observer timespace volume (defined over interval Δo at to), per 4D (tj, xj) timespace volume for j=1 and 2 (defined over all tj and xj), and per 4D (tjj, xjj) timespace volume for j=1 and 2 (defined over interval Δj at tjj=tj).

The probability configuration of a multiporticle system evolves in time, whether or not it is watched by an external observer. Ideally, the configuration in MTe evolves independently of the observer, except when a measurement is made. As discussed previously, even then it is unnatural that the wavefunction collapses non-deterministically. To avoid wavefunction collapse, and pursue a more complete, self-contained theory in which CLs independently generate timespace, an alternate internal-perspective MT (MTi) approach may be adopted. Such an approach neither presumes a classic external observer, nor relies on a pre-existing 4D timespace, but assumes only that every porticle has an innate proper time, and that CL (gauge) bosons are associated with mathematical objects that define common multi-D properties.

One version of MTi is an extension of MTe, in which the measuring device is explicitly included and modeled in Γ, such that the associated wavefunction does not appear to collapse when a measurement is performed, but instead separates into a sum of decohered states. The external inertial observer thus becomes an internal inertial observer. It is not necessary to model the measuring device in detail, but only to the extent that the wavefunction properly evolves into a sum of decohered eigenstates of the measured quantity during a measurement.

A second version of MTi is an extension of the original ∩ scheme. Because ∩ relies on proper times with respect to distinct porticles, it is inherently covariant in time, but utilizes a mixed perspective. Other quantities must still be defined with respect to some reference frame. In the new MTi approach, times and other quantities are now all defined from the consistent but non-inertial perspective of a given porticle, indicated by the first index in the link-pair amplitudes. For two porticles 1 and 2, the full MTi link-pair amplitude Γ12 with respect to porticle 1 covers links 1-1, 1-2 and 2-2, while the truncated γ12 covers only links 1-1 and 1-2. All times are measured with respect to the proper time of porticle 1, so t1j=τ1j, and t2j is the most probable time along the world line of porticle 1 corresponding to τ2j, for j=1 or 2. Note that for any (τ12,τ21) there is a probability distribution over t21, and for any (τ12,t21) a distribution over τ21, defined by Γ12. Amplitude γ12 defines a partial wavefunction ɸ12(t1, r1; t2, r2) in the usual way, except that now porticle 1 replaces an external observer. ɸ12 is a joint amplitude, from the perspective of porticle 1 at t1, that 1 is at r1 and 2 is at (t2, r2). The system may equally be represented by ɸ21(t2, r2; t1, r1) from the vantage of porticle 2, with amplitudes Γ21 and γ21. While the perspective in this approach is inherently non-inertial, an inertial perspective may be achieved by transforming quantities to a COM reference frame. Classic measurements remain problematic in this MTi version, as there is no capable observer.

For systems with N>2 porticles, link pairs may be correlated, such that CL amplitudes cannot be encoded using two-porticle Γojk and γojk, or Γjk and γjk. For N=3 porticles 1, 2 and 3, it is necessary to introduce correlated link-pair amplitudes Γo123 and γo123, or Γ123 and γ123. Information regarding links not involving the observer is found in Γo123, but not γo123. Similarly, information regarding links not involving porticle 1 is in Γ123 but not γ123. The corresponding partial MTi wavefunction is represented by ɸ123(t1, r1; t2, r2; t3, r3). The system may equivalently be represented by ɸ213 or ɸ312, from the perspectives of 2 or 3.

What are the functional forms of the link-pair amplitudes and wavefunctions in MTT? The explicit functional dependence ultimately derives from the MT equations of motion, which have not yet been identified. However, it is still possible to explore features expected of actual solutions, and to tentatively consider quasiphysical functions which embody these features. Consider then an MTe system consisting of an observer plus a single porticle #1. Let γo1 (tooa, toob, θoaob, uoaob; tooc, tood, θocod, uocod; to1a, t1oa, θo1a, uo1a; to1b, t1ob, θo1b, uo1b) be the truncated link-pair amplitude covering both o-o and o-1 links, and ɸo1(to, xo; t1, x1) the associated partial wavefunction. All quantities are defined from the observer perspective.

It is useful to express both γo1 and ɸo1 as products γo1 = γfree γint and ɸo1 = ɸfree ɸint of free-porticle and interaction terms. In the weak (free-porticle) interaction limit, we expect

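$$
\phi_{\text{free}} \sim \Psi_{\text{free}} \sim e^{-i\omega_o t_o + i\mathbf{k}_o\cdot\mathbf{x}_o}\; e^{-i\omega_1 t_1 + i\mathbf{k}_1\cdot\mathbf{x}_1}
$$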

where (ωo,ko) and (ω1,k1) correspond to the (energy, momentum) of the observer and porticle, respectively. Here ℏ²ω² = ℏ²k²c² + m²c⁴ holds separately for the observer and the porticle, where m is the invariant rest mass.
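[Editorial sketch: the relation between frequency, wavenumber and rest mass quoted above can be evaluated directly. The function name `angular_frequency` and the default natural units (ℏ = c = 1) are assumptions made for illustration only; they do not appear in Fleegello's text.]

```python
import math

def angular_frequency(k, m, hbar=1.0, c=1.0):
    """Return omega >= 0 satisfying hbar^2 w^2 = hbar^2 k^2 c^2 + m^2 c^4.

    k is the magnitude of the wavevector and m the invariant rest mass.
    The defaults hbar = c = 1 are an illustrative choice of units.
    """
    return math.sqrt((hbar * k * c) ** 2 + (m * c ** 2) ** 2) / hbar

# A massless porticle reduces to omega = c * k.
print(angular_frequency(k=3.0, m=0.0))
# A massive porticle at rest (k = 0) gives omega = m c^2 / hbar.
print(angular_frequency(k=0.0, m=4.0))
```

[The same function applies separately to the observer pair (ωo,ko) and the porticle pair (ω1,k1).]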

This free-porticle behavior in ɸfree can be reproduced by setting

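$$
\gamma_{\text{free}} \sim
e^{-0.5\,i\omega_o t_{oaob} + 0.5\,i\mathbf{k}_o\cdot\mathbf{x}_{oaob}}\;
e^{-0.5\,i\omega_o t_{ocod} + 0.5\,i\mathbf{k}_o\cdot\mathbf{x}_{ocod}}\;
e^{-0.5\,i\omega_1 t_{1oa} + 0.5\,i\mathbf{k}_1\cdot\mathbf{x}_{o1a}}\;
e^{-0.5\,i\omega_1 t_{1ob} + 0.5\,i\mathbf{k}_1\cdot\mathbf{x}_{o1b}}
$$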

such that γfree is a product of four factors, one for each link, and each factor is in turn the product of an exponential temporal and an exponential spatial frequency term. The effective respective time variables are toaob=0.5(tooa+toob), tocod=0.5(tooc+tood), t1oa and t1ob, while the space variables are xoaob=0.5c (toob-tooa) uoaob, xocod=0.5c (tood-tooc) uocod, xo1a=c (t1oa-to1a) uo1a and xo1b=c (t1ob-to1b) uo1b. Here xo1 is a 3D distance vector from the observer to porticle 1, defined separately for links o1a and o1b at t1o.
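[Editorial sketch: the claim that γfree reproduces ɸfree can be checked numerically by substituting the link arguments used to define the partial wavefunction (tooa=to-xo/c, toob=to+xo/c, to1a=to-x1/c, to1b=to+x1/c, t1oa=t1ob=t1, with θo1a=+1 and θo1b=-1). The helper names `dot`, `scale`, `phi_free` and `gamma_free`, the units c=1, and the sample values are all illustrative assumptions.]

```python
import cmath
import math

def dot(a, b):
    """Euclidean dot product of two 3D tuples."""
    return sum(p * q for p, q in zip(a, b))

def scale(s, v):
    """Multiply a 3D tuple by a scalar."""
    return tuple(s * p for p in v)

def phi_free(w_o, k_o, w_1, k_1, t_o, x_o, t_1, x_1):
    """exp(-i w_o t_o + i k_o.x_o) * exp(-i w_1 t_1 + i k_1.x_1)."""
    return (cmath.exp(1j * (dot(k_o, x_o) - w_o * t_o))
            * cmath.exp(1j * (dot(k_1, x_1) - w_1 * t_1)))

def gamma_free(w_o, k_o, w_1, k_1, t_o, x_o, t_1, x_1, c=1.0):
    """Product of four half-weight link factors, evaluated at the
    link arguments that define the partial wavefunction."""
    r_o = math.sqrt(dot(x_o, x_o))  # |x_o|, assumed nonzero here
    r_1 = math.sqrt(dot(x_1, x_1))  # |x_1|, assumed nonzero here
    # Observer self-link oa-ob; self-link oc-od takes identical arguments.
    t_ooa, t_oob, u_oaob = t_o - r_o / c, t_o + r_o / c, scale(1.0 / r_o, x_o)
    t_oaob = 0.5 * (t_ooa + t_oob)
    x_oaob = scale(0.5 * c * (t_oob - t_ooa), u_oaob)
    self_factor = cmath.exp(0.5j * (dot(k_o, x_oaob) - w_o * t_oaob))
    # Observer-porticle links o1a (theta = +1) and o1b (theta = -1).
    t_o1a, t_1oa, u_o1a = t_o - r_1 / c, t_1, scale(+1.0 / r_1, x_1)
    t_o1b, t_1ob, u_o1b = t_o + r_1 / c, t_1, scale(-1.0 / r_1, x_1)
    x_o1a = scale(c * (t_1oa - t_o1a), u_o1a)
    x_o1b = scale(c * (t_1ob - t_o1b), u_o1b)
    factor_a = cmath.exp(0.5j * (dot(k_1, x_o1a) - w_1 * t_1oa))
    factor_b = cmath.exp(0.5j * (dot(k_1, x_o1b) - w_1 * t_1ob))
    return self_factor * self_factor * factor_a * factor_b

# Arbitrary sample values (w_o, k_o, w_1, k_1, t_o, x_o, t_1, x_1).
args = (2.0, (0.3, -0.1, 0.2), 1.5, (0.4, 0.5, -0.6),
        0.7, (1.0, 2.0, 2.0), 0.9, (3.0, 0.0, 4.0))
print(abs(gamma_free(*args) - phi_free(*args)))  # difference at rounding level
```

[The agreement holds for any t1, because the spatial vectors xo1a and xo1b always sum to 2x1 under these substitutions, while each of the two factors contributes half of the temporal phase.]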

The link frequencies corresponding to (ωo,ko) and (ω1,k1) can be interpreted as properties of the observer and porticle 1, respectively, and not quantities transferred by CLs. This is consistent with minimal interactions. Yet interactions between the observer and itself, or the observer and porticle 1, may cause information transfer along links, and determine γint.

Consider such a link pair between the observer and porticle 1. Define δo1 ≡ θo1 (t1o-to1), the forward time displacement between the observer and porticle 1 along link o1a or o1b. Let ωo1>0 be the temporal frequency associated with energy transferred along δo1, and ko1 the wavevector associated with linear momentum transferred in the same direction. Mathematically, a summation ∑ωe-iωt over a range of ω peaks at t∼0. If interactions correlate the times to and t1, then |γint| should peak at some δo1 ∼ d1/c, where d1 is a 3D distance vector that is in general a function of t1. If xo1 is bounded, |γint| should also peak at xo1∼d1. The functional dependence of γint can then be approximated by a product of two 4D sums

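$$
\gamma_{\text{int}} \sim
\sum_{\omega_{o1a}} e^{-i\omega_{o1a}(\delta_{o1a} - d_1/c)}
\sum_{\mathbf{k}_{o1a}} e^{+i\mathbf{k}_{o1a}\cdot(\mathbf{x}_{o1a} - \mathbf{d}_1)}
\sum_{\omega_{o1b}} e^{-i\omega_{o1b}(\delta_{o1b} - d_1/c)}
\sum_{\mathbf{k}_{o1b}} e^{+i\mathbf{k}_{o1b}\cdot(\mathbf{x}_{o1b} - \mathbf{d}_1)}
$$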

over a range of (ωo1a,ko1a) and (ωo1b,ko1b) for links o1a and o1b, respectively.
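[Editorial sketch: the statement that a sum of phase factors over a range of ω peaks where its argument vanishes can be illustrated with a single temporal factor of γint. The frequency grid, the value d1/c = 2, and the name `phase_sum` are arbitrary assumptions chosen for the demonstration.]

```python
import cmath

def phase_sum(t, omegas):
    """Sum of exp(-i * omega * t) over the supplied frequencies."""
    return sum(cmath.exp(-1j * w * t) for w in omegas)

d_over_c = 2.0                                   # assumed value of d1/c
omegas = [n / 10 for n in range(1, 201)]         # omega = 0.1, 0.2, ..., 20.0
deltas = [n / 20 for n in range(-100, 101)]      # trial displacements delta_o1
magnitudes = [abs(phase_sum(delta - d_over_c, omegas)) for delta in deltas]

peak_delta = deltas[magnitudes.index(max(magnitudes))]
print(peak_delta)        # displacement at which the magnitude is largest
print(max(magnitudes))   # equals the number of terms when all phases align
```

[Away from δo1 = d1/c the phases point in different directions and largely cancel. The spatial sums over ko1 behave in the same way, component by component.]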

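What is the functional behavior of the corresponding ɸint(to, xo; t1, x1)? Define a time correlation parameter εo1=(t1-to) and an associated vector $\boldsymbol{\varepsilon}_{o1} = \varepsilon_{o1}\,(\mathbf{x}_1/x_1)$, where $\mathbf{x}_{o1} = \mathbf{x}_1 + \theta_{o1}\,c\,\boldsymbol{\varepsilon}_{o1}$ when to1a=to-x1/c, to1b=to+x1/c, and t1oa=t1ob=t1. Then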

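$$
\phi_{\text{int}} \sim
\sum_{\omega_{o1a}} e^{-i\omega_{o1a}(c\,\varepsilon_{o1} + x_1 - d_1)/c}
\sum_{\mathbf{k}_{o1a}} e^{+i\mathbf{k}_{o1a}\cdot(c\,\boldsymbol{\varepsilon}_{o1} + \mathbf{x}_1 - \mathbf{d}_1)}
\sum_{\omega_{o1b}} e^{-i\omega_{o1b}(-c\,\varepsilon_{o1} + x_1 - d_1)/c}
\sum_{\mathbf{k}_{o1b}} e^{+i\mathbf{k}_{o1b}\cdot(-c\,\boldsymbol{\varepsilon}_{o1} + \mathbf{x}_1 - \mathbf{d}_1)} .
$$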

This function peaks at εo1∼(d1-x1)/c for link o1a, but at εo1∼(x1-d1)/c for link o1b (each condition holding for both the scalar parameter and its associated vector), or overall at t1∼to and x1∼d1, as expected with interactions.
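[Editorial sketch: the step from γint to ɸint follows from substituting the wavefunction link arguments into the definition of δo1. The working below assumes θo1a=+1 and θo1b=-1, as in the definition of the partial wavefunction, and uses only symbols already introduced.]

```latex
\begin{aligned}
\delta_{o1a} &= \theta_{o1a}\,(t_{1oa} - t_{o1a})
              = (+1)\,\bigl(t_1 - t_o + x_1/c\bigr)
              = \varepsilon_{o1} + x_1/c, \\
\delta_{o1b} &= \theta_{o1b}\,(t_{1ob} - t_{o1b})
              = (-1)\,\bigl(t_1 - t_o - x_1/c\bigr)
              = -\varepsilon_{o1} + x_1/c, \\
\delta_{o1a} - d_1/c &= (c\,\varepsilon_{o1} + x_1 - d_1)/c, \qquad
\delta_{o1b} - d_1/c = (-c\,\varepsilon_{o1} + x_1 - d_1)/c .
\end{aligned}
```

[Both temporal exponents vanish together only if cεo1 = d1-x1 and cεo1 = x1-d1 hold at once, which requires εo1=0 and x1=d1. This is the joint peak at t1∼to and x1∼d1.]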

Consider now an observer interactive self-link pair. Define δoaob=θoaob(toob-tooa) and δocod=θocod(tood-tooc), the forward time displacements along observer self-links oa-ob and oc-od, respectively. Let ωoo be the temporal frequency associated with energy transferred along δoo, and koo the wavevector associated with linear momentum transferred in the same direction, for each link. Suppose that the functional dependence of γint with respect to observer self-links can be approximated by a product of two 4D sums

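$$
\gamma_{\text{int}} \sim
\sum_{\omega_{oaob}} e^{-i\omega_{oaob}\,\delta_{oaob}}
\sum_{\mathbf{k}_{oaob}} e^{+i\mathbf{k}_{oaob}\cdot\mathbf{x}_{oaob}}
\sum_{\omega_{ocod}} e^{-i\omega_{ocod}\,\delta_{ocod}}
\sum_{\mathbf{k}_{ocod}} e^{+i\mathbf{k}_{ocod}\cdot\mathbf{x}_{ocod}}
$$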

over a range of (ωoaob,koaob) and (ωocod,kocod) defined separately for each self-link oa-ob and oc-od. The corresponding ɸint peaks at xo~0, corresponding to the observer seeing itself localized at the origin of the observer coordinate system.

By using generalized link amplitudes that include both free-porticle and interaction terms, partial wavefunctions ɸmt can be made equivalent to the complete wavefunction Ψmt. To the extent they define this wavefunction, links essentially define timespace itself. When interactions are considered, γo1 includes dependence on (ωoaob,koaob), (ωocod,kocod), (ωo1a,ko1a), and (ωo1b,ko1b), in addition to (ωo,ko; ω1,k1). The link-pair probability per dependent quantity is again |γo1|².

[Fleegello unnecessarily restricted each CL to the transfer of a single quantum of information. A link may actually transfer any number of information packets, each a superposition of frequency (or other) states. A link amplitude must specify the number of packets, as well as the link type (PCL or RCL) and the state superposition distribution associated with each. Amplitudes may alternatively be reformulated in a way resembling QFT, to specify the number of quanta occupying each of a set of link states.]

Return to link pairs o1a and o1b between the observer and porticle 1. What are the restrictions on transferred quantities, if energy and momentum are conserved at every link vertex? In the case of RCLs (e.g., an observer may scatter a real photon from porticle 1 to see where and when it is), only to1a=to1b (link pairs defining the wavefunction ɸint) is allowed if porticle 1 is elementary. Quantities ωo1 and ko1 must further satisfy the standard relation between temporal frequency, wavenumber, and rest mass of the link porticle along each link.

For PCLs, energy-momentum conservation requires that the effective link mass for a solitary link be imaginary, if porticle 1 is elementary. This is allowed, as PCLs are transient, and represent only the effect of a force field, not actual porticles. Although information moves at light speed, the relation between ωo1 and ko1 is not constrained. Because a negative frequency ωo1 moving in the positive time direction is equivalent to a positive frequency moving in the opposite θo1 direction, physically meaningful ωo1 can be restricted to positive values.

Momentum wavevectors ko1 may in general contribute to ɸint even when they point in directions other than uo1=θo1x1/x1. Whereas the average value of ko1 should be collinear with uo1 for RCLs, this restriction does not apply to PCLs. The quantity ko1 presumably contributes to changes in the velocity of porticle 1 seen by the observer. Define k+o1 to be the component of ko1 along uo1, with k+o1=k+o1uo1. The philosopher Puuliin has suggested that forward wavenumbers k+o1uo1 must be positive if an interaction is repulsive, and negative if it is attractive. k+o1uo1 respectively points in the same direction as uo1, or in the opposite direction.

How closely can the proposed product of two 4D sums of frequency eigenfunctions in γint correlate to with t1, and x1 with d1? In addition to the time correlation parameter εo1, define a 3D distance correlation vector ηo1=x1-d1 at (to,t1). In the classical limit, to and t1 can be perfectly synchronized, and a unique 3D distance d1(t1) exists at t1. The quantities εo1 and ηo1 are then both zero with 100% probability. The corresponding γint can be approximated by a maximal summation over (ωo1a,ko1a) and (ωo1b,ko1b) eigenfunctions. In the classical extreme, the sum is over discrete values of ωo1 from 0 to +∞, and each component of ko1 from -∞ to +∞ (including zero), at increments Δωo1 and Δko1, in the limit Δωo1→0 and Δko1→0. Each phase factor in the sum is multiplied by the four-dimensional product of the increments.

[This sum is comparable to the product δ(ε) δ3(η) of four Draci delta functions, where δ(x) is defined by δ(x) = 0 for x≠0, and δ(0)=∞ such that the area under δ(x) is unity. δ3 is the product of three ordinary delta functions, one for each spatial component of η.]
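[Editorial sketch: the approach toward a delta-like peak can be explored numerically for the temporal factor alone, by cutting the frequency sum off at a finite ωmax and weighting each phase factor by the increment Δω. The cutoff values, the increment, and the name `weighted_phase_sum` are assumptions for illustration. The sketch shows only that the central peak grows taller and narrower as the cutoff rises; it does not establish the full limit.]

```python
import cmath
import math

def weighted_phase_sum(eps, w_max, dw):
    """Sum of exp(-i * w * eps) * dw for w = 0, dw, 2*dw, ... below w_max."""
    n = int(round(w_max / dw))
    return sum(cmath.exp(-1j * (j * dw) * eps) for j in range(n)) * dw

dw = 0.01
for w_max in (10.0, 20.0, 40.0):
    height = abs(weighted_phase_sum(0.0, w_max, dw))   # peak value at eps = 0
    first_zero = 2 * math.pi / w_max                   # nearest null of the sum
    residual = abs(weighted_phase_sum(first_zero, w_max, dw))
    print(w_max, height, first_zero, residual)
```

[The peak height equals ωmax, while the distance to the first null is 2π/ωmax, so the product of height and width stays of order unity as the cutoff increases.]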

Can such a link amplitude be realized in our physical world? RCLs and PCLs actually have distinct minimum and maximum allowed absolute values (ωmin, ωmax) of angular frequency and (kmin, kmax) of wavenumber in any superposition of simple states.

For real massless bosons, an RCL travel distance d1 defines a limiting maximum wavelength λmax=2d1, entailing a minimum frequency ωmin=𝜋c/d1 and wavenumber kmin=𝜋/d1. Limiting maximum values of frequency and wavenumber are defined only by the (inverse) smallest meaningful size of a timespace interval, and so are presumably huge but finite.
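[Editorial sketch: the lower limits quoted for a real massless boson follow from λmax=2d1 together with k=2π/λ and ω=ck. The function name `rcl_lower_limits` and the default c=1 are assumptions for illustration.]

```python
import math

def rcl_lower_limits(d1, c=1.0):
    """Return (lambda_max, k_min, omega_min) for an RCL travel distance d1.

    Uses lambda_max = 2 * d1, k_min = 2 * pi / lambda_max = pi / d1,
    and omega_min = c * k_min = pi * c / d1 for a massless boson.
    """
    lambda_max = 2.0 * d1
    k_min = 2.0 * math.pi / lambda_max
    omega_min = c * k_min
    return lambda_max, k_min, omega_min

print(rcl_lower_limits(d1=1.0))
print(rcl_lower_limits(d1=0.5, c=2.0))
```

[Doubling the travel distance halves both lower limits, so longer links admit lower-frequency components.]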